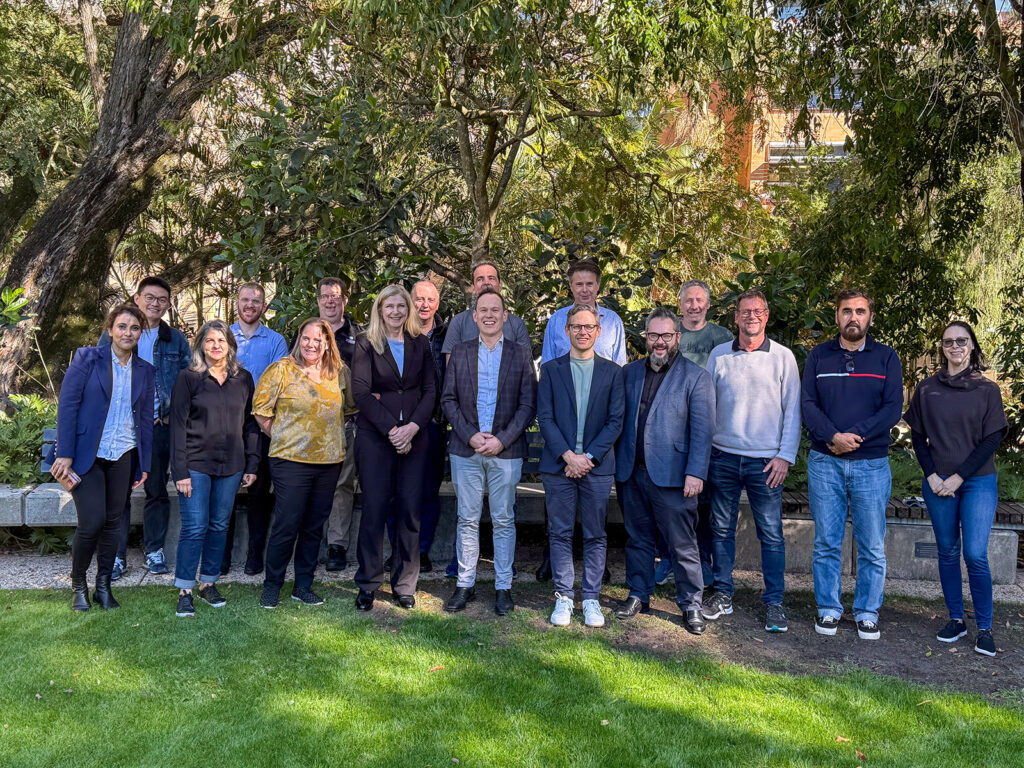

Cross-disciplinary research fosters innovation by integrating diverse perspectives, leading to more holistic and impactful solutions. Complex global problems rarely fit neatly within disciplinary boundaries, and collaboration across fields is essential to address challenges that no single discipline can solve alone. As they say, “Teamwork makes the dream work”!

On 15 July, CIRES hosted a Q&A discussion at The University of Queensland as part of our Lunch & Learn series with guest speakers Professor Xue Li, Dr Aneesha Bakharia, and Dr Avijit Sengupta. These UQ experts span Computer Science, AI, and Business Information Systems, as well as the key application areas of Education, Health, and Technology. They shared insights into:

- what makes cross-disciplinary research successful,

- how to build and sustain collaborative teams, and

- how students can benefit from this approach in both academic and industry pathways.

They also discussed how different aspects of cross-disciplinary research, including collaboration, ethics, and decision-making, could be transformed by the proliferation of generative AI.

“It was inspiring to hear from researchers across disciplines sharing not only their successes but also the real challenges of collaboration. Cross-disciplinary research pushes us to rethink assumptions and explore unexpected connections.” – Dr Zixin Wang, CIRES Postdoctoral Research Fellow.

“I learned that successful cross-disciplinary research depends on firstly understanding the specific and most important problems and needs of other domains, to effectively apply one’s expertise. For junior researchers, this involves strategically focusing on a publishable core contribution, ensuring clear communication, and prioritising critical aspects, like data quality, and incorporating a human in the loop, for responsible system deployment.” – Dr Javad Pool, CIRES Postdoctoral Research Fellow.

“Attending the session provided me with insights into the complexity of cross-disciplinary research and valuable lessons from experienced researchers. My main takeaways are ensuring we solve problems faced by domain experts and be ready to learn different things!” – Nova Sepadyati, UQ PhD Researcher.

Huge thanks to our speakers for sharing their valuable experience with the group and to our CIRES Postdoc Team – Stanislav Pozdniakov, Javad Pool, Zixin Wang, and Xuwei (Ackesnal) Xu – for organising such a thought-provoking session!